Meeting Minutes and Project Files

Our Publication References

Vladimir A. Makarov, Terry Stouch, Brandon Allgood, Chris D. Willis, Nick Lynch, "Best practices for artificial intelligence in life sciences research", Drug Discovery Today, Volume 26, Issue 5, 2021, Pages 1107-1110, ISSN 1359-6446, https://doi.org/10.1016/j.drudis.2021.01.017. Free pre-print available at https://osf.io/eqm9j/

Abstract: We describe 11 best practices for the successful use of artificial intelligence and machine learning in pharmaceutical and biotechnology research at the data, technology and organizational management levels.

Summary of Our Paper in Drug Discovery Today ("The 11 Commandments")

- DS is not enough: domain knowledge is a must

- Quality data (…and metadata) → quality models

- Adopt a long-term data management methodology, e.g. FAIR, for life-cycle planning of scientific data

- Publish model code, and testing and training data, sufficient for reproduction of research work, along with model results

- Use a model management system

- Use ML methods fit for the problem class

- Manage executive expectations

- Educate your colleagues – leaders in particular

- AI models + humans-in-the-loop = “AI-in-the-loop” (Chas Nelson invented the term)

- Experiment and fail fast if needed. A bad ML model that is quickly deemed worthless is better than a deceptive model

- Maintain an Innovation Center for moonshot-type technology programs (this COE is an example of one)

Walsh, I., Fishman, D., Garcia-Gasulla, D. et al. "DOME: recommendations for supervised machine learning validation in biology", Nature Methods 18, 1122–1127 (2021). https://doi.org/10.1038/s41592-021-01205-4

Abstract: DOME is a set of community-wide recommendations for reporting supervised machine learning–based analyses applied to biological studies. Broad adoption of these recommendations will help improve machine learning assessment and reproducibility.

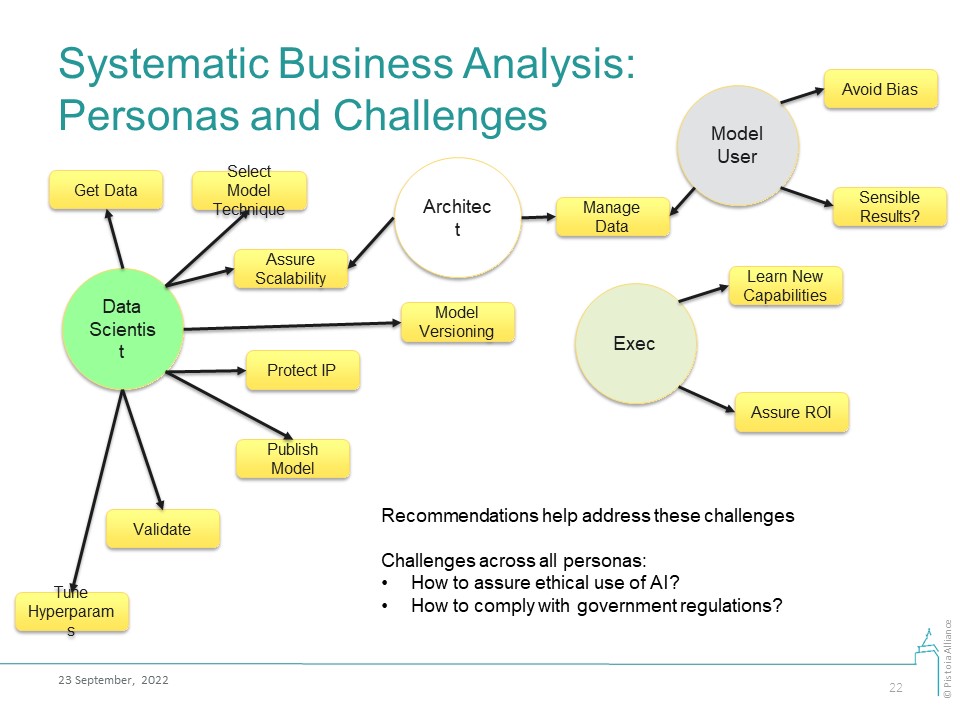

Business Analysis

Our business analysis is based on enumeration of business personas and the challenges that these personas may face in their work with AI/ML technologies. The proposed Good Machine Learning Practices (GMLPs) answer these challenges.

Persona | Challenges | GMLPs that address these challenges | Author(s) | Reviewer(s) |

Data Scientist | DS1: How do I pick a suitable data modeling technique? | 1. Make recommendations for methods suitable for specific problem classes; or for auto-ML systems 2. DS should learn the application domain and/or work with domain experts | ||

DS2: How do I store and version a model? | 1. Recommend model versioning systems 2. Refer to best practices in ML Model Registration, Deployment, Monitoring 3. Best practices and regulatory standards in requirements management and model provenance | Christophe Chabbert | ||

| Samuel Ramirez | |||

1. Refer to the DOME recommendations 2. Quickly fail models that are bad. A deceptive model is worse than a bad one | Fotis Psomopoulos | |||

Elina Koletou | ||||

1. Recommend best practices from the FAIR Toolkit 2. Evaluate data for “fit for purpose”, in particular, for the metadata quality 3. Refer to best practices in Exploration, Cleaning, Normalizing, Feature Engineering, Scaling | Natalja Kurbatova, Christophe Chabbert, Berenice Wulbrecht | |||

Christophe Chabbert | ||||

DS8: How do I publish AI models? | Publish model code, and testing and training data, sufficient for reproduction of research work, along with model results. Make recommendations for an appropriate minimal information model | Fotis Psomopoulos | ||

DS9: How do I protect IP? | ||||

DS10: How should the applicability domain of the model be expressed? | Use Applicability Domain Analysis | Samuel Ramirez | ||

Model User | MS1: How should I manage the data? Includes data protection, versioning, labeling | 1. Recommend best practices from the FAIR Toolkit 2. Evaluate data for “fit for purpose”, in particular, for the metadata quality 3. Refer to best practices in Exploration, Cleaning, Normalizing, Feature Engineering, Scaling | Natalja Kurbatova, Christophe Chabbert, Berenice Wulbrecht | |

MS2: How do I avoid bias? | ||||

MS3: How do I make sure the model produces sensible answers? | 1. Set up a “human-in-the-loop” system. Recommend tools for this, if they exist 2. Set up a business feedback mechanism for flagging model results that do not align with expectations | Chas Nelson | ||

Architect | Christophe Chabbert | |||

See above for Model User | Christophe Chabbert | |||

1. Refer to best practices in DevOps 2. Automated model packaging for ease of production delivery | Elina Koletou | |||

Elina Koletou | ||||

Executive | E1: How do I learn about the costs and benefits of AI/ML technologies and the limits of what is possible? | Make recommendations for conferences, training materials, education products, review papers, and books. These must be updated frequently | ||

E2: How do I value and justify investments in AI? | 1. Make recommendations for technology valuation methodologies 2. Provide business questions, goals, KPIs 3. Continuously review model performance against the business needs (goals, KPIs) | Prashant Natarajan | ||

E3: How do I enable technology adoption? | 1. Bring expertise together to assess compatibility of new technology with business architecture 2. Ensure sufficient knowledge transfer so that users are comfortable adopting and using the new approach 3. Have a sustainability plan so resources are available when needed | Prashant Natarajan |
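As an illustration of challenge DS10 in the table above, the sketch below shows one very simple form of applicability domain analysis: a bounding-box check that treats a query as in-domain only if every feature lies within the range seen in the training data. This is a minimal example under stated assumptions, not a method prescribed by this project. The function names `fit_bounding_box` and `in_domain` and the sample values are hypothetical, and practical work often uses richer approaches such as distance-based or density-based checks.

```python
from typing import List, Sequence, Tuple

Box = List[Tuple[float, float]]


def fit_bounding_box(training_rows: Sequence[Sequence[float]]) -> Box:
    """Record the (min, max) of every feature column in the training data."""
    if not training_rows:
        raise ValueError("training_rows must not be empty")
    columns = list(zip(*training_rows))  # transpose rows into columns
    return [(min(col), max(col)) for col in columns]


def in_domain(box: Box, query: Sequence[float]) -> bool:
    """Return True if every feature of the query lies inside the training range."""
    if len(query) != len(box):
        raise ValueError("query has a different number of features than the training data")
    return all(low <= value <= high for (low, high), value in zip(box, query))


# Hypothetical training data: two numeric features per sample.
training = [[1.0, 10.0], [2.0, 20.0], [3.0, 15.0]]
box = fit_bounding_box(training)

print(in_domain(box, [2.5, 12.0]))  # inside both feature ranges
print(in_domain(box, [4.0, 12.0]))  # first feature exceeds the training maximum
```

A prediction for a query that fails such a check can be flagged for review rather than reported as-is, which fits the human-in-the-loop practice listed under MS3.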

Common challenges across ALL personas:

- How do I develop and use AI/ML models in an ethical manner (privacy protection, ethical use, full disclosure, etc.)?

- How do I develop and use AI/ML models in compliance with government regulations?

Common responsibility across multiple personas:

- Model improvement is a shared responsibility between the Model User, Architect, and Data Scientist personas.

Glossary notes: In the context of this document these terms have these meanings:

- Performance - metrics used to evaluate model quality, such as sensitivity, specificity, AUC (area under the ROC curve), or similar measures, or execution speed.

- Validation - a test to confirm the performance of the model (as defined above); not to be confused with “validation” in regulated systems.
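To make the "Performance" definition concrete, the following minimal sketch computes sensitivity and specificity for a binary classifier from true and predicted labels. The function names and the example labels are hypothetical, chosen only for illustration; established libraries provide equivalent and more complete metrics.

```python
from typing import Sequence, Tuple


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[int, int, int, int]:
    """Count (tp, fp, tn, fn) for binary labels, where 1 is the positive class."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == 1 and pred == 1:
            tp += 1
        elif truth == 0 and pred == 1:
            fp += 1
        elif truth == 0 and pred == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def sensitivity(tp: int, fn: int) -> float:
    """True positive rate: fraction of actual positives that were detected."""
    return tp / (tp + fn) if (tp + fn) else float("nan")


def specificity(tn: int, fp: int) -> float:
    """True negative rate: fraction of actual negatives that were rejected."""
    return tn / (tn + fp) if (tn + fp) else float("nan")


# Hypothetical labels for eight samples.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1]

tp, fp, tn, fn = confusion_counts(y_true, y_pred)
print(sensitivity(tp, fn))  # 3 of 4 positives detected -> 0.75
print(specificity(tn, fp))  # 3 of 4 negatives rejected -> 0.75
```

Reporting both values, rather than accuracy alone, helps expose a model that performs well only on the majority class.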

Project members:

Fotis | Psomopoulos | CERTH |

Brandon | Allgood | Valo Health |

Christophe | Chabbert | Roche |

Adrian | Schreyer | Exscientia |

Elina | Koletou | Roche |

Frederik | van der Broek | Elsevier |

David | Wöhlert | Elsevier |

John | Overington | Exscientia |

Loganathan | Kumarasamy | Zifo R&D |

Neal | Dunkinson | Scibite |

Simon | Thornber | GSK |

Irene | Pak | BMS |

Berenice | Wulbrecht | Ontoforce |

Prashant | Natarajan | H2O.ai |

Valerie | Morel | Ontoforce |

Yvonna | Li | Roche |

Silvio | Tosatto | Unipd.it |

Natalja | Kurbatova | Zifo R&D |

Mufis | Thalath | Zifo R&D |

Niels | Van Beuningen | Vivenics |

Ton | Van Daelen | BIOVIA (3ds) |

Lucille | Valentine | Gliff.ai |

Adrian | Fowkes | Lhasa |

Chas | Nelson | Gliff.ai |

Paolo | Simeone | Ontoforce |

Christoph | Berns | Bayer |

Mark | Earll | Syngenta |

Vladimir | Makarov | Pistoia Alliance |

Useful Links

- EU Artificial Intelligence Act: https://www.consilium.europa.eu/en/press/press-releases/2022/12/06/artificial-intelligence-act-council-calls-for-promoting-safe-ai-that-respects-fundamental-rights/

- US FDA SaMD Action Plan (September 2022): https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-and-machine-learning-software-medical-device

- UK MHRA Software as a Medical Device: https://www.gov.uk/government/publications/software-and-ai-as-a-medical-device-change-programme/software-and-ai-as-a-medical-device-change-programme-roadmap

- Related topic at the Pistoia Alliance, a FAIR Guide for Clinical Data: FAIR4Clin

- The proof of the pudding: in praise of a culture of real-world validation for medical artificial intelligence

- The need to separate the wheat from the chaff in medical informatics: Introducing a comprehensive checklist for the (self)-assessment of medical AI studies

- MLflow

- SAS Model Manager

- Promoting the Use of Trustworthy Artificial Intelligence in the Federal Government. Executive Order 13960 of December 3, 2020

- https://www.fda.gov/science-research/science-and-research-special-topics/artificial-intelligence-and-machine-learning-aiml-drug-development